Adapting Modern Identity Security in an AI-Driven World

The most critical control layer in modern enterprise environments

Identity has become the most critical control layer in modern enterprise environments. Not because it is inherently weak, but because it connects everything.

Applications, infrastructure, data, automation, and increasingly AI-driven systems all rely on identity to function. That centrality has made identity the preferred attack surface for adversaries. Credential theft, lateral movement, and account takeover continue to succeed not because organizations lack security tools, but because identity threats rarely look like threats at all.

At the same time, the way organizations secure identity has not evolved at the same pace.

Most identity strategies still rely on a familiar model. Define access. Review it periodically. Enforce policies. Move on.

That model was built for a different environment.

Today, identity is fluid. It is reused across systems. It is embedded in automation. It is extended to non-human identities. And increasingly, it is delegated to systems and AI agents that can act autonomously.

In this context, visibility into access alone is no longer sufficient.

Identity security requires a dynamic visibility and detection layer. One that reflects how identity is actually used, how it changes over time, and how it can be misused without breaking anything.

Identity Does Not Fail Loudly

In most areas of security, failure is visible.

A vulnerability is exploited. A system crashes. A process behaves unexpectedly.

Identity works differently.

When identity is compromised, systems often continue to function as expected. Access is valid. Actions are permitted. Nothing appears broken.

This is what makes identity-based attacks difficult to detect early. An attacker operating with legitimate credentials does not need to evade controls. They operate within them.

This is also why traditional detection approaches struggle. Alerts are designed to capture anomalies, not subtle misuse of legitimate access.

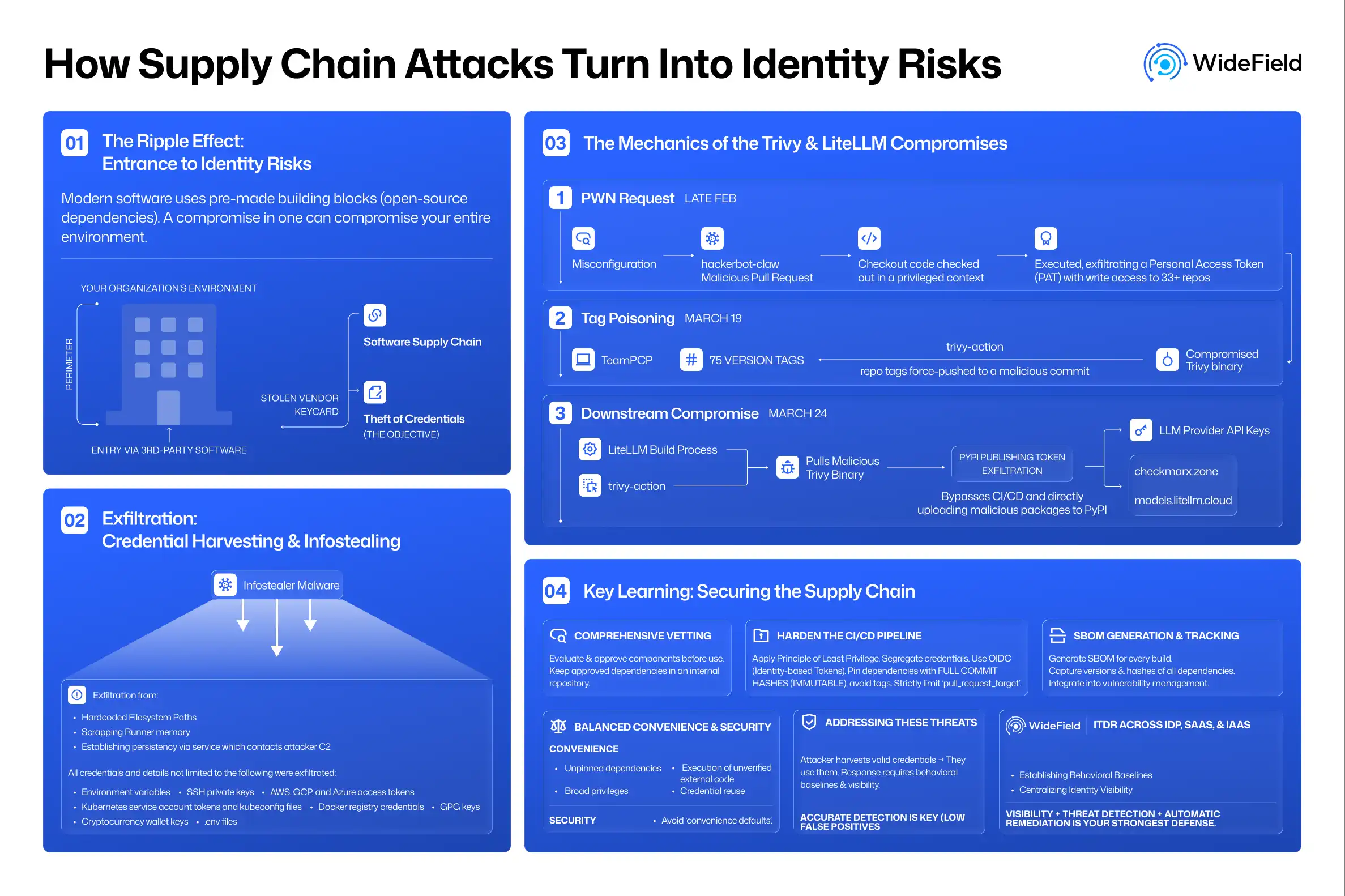

Understanding identity risk requires observing patterns over time, not just isolated events. As the CISA Binding Operational Directive 25-01 framework illustrates, even well-enforced security configurations can be bypassed, which is why posture management and active threat detection must work together. Our analysis of what that directive gets right and where it falls short is worth reading.

The Limits of Logs and Access Reviews

Organizations often assume that if identity activity is logged, it can be understood.

In practice, logs provide signals, not meaning.

They capture authentication events, token usage, API calls, and permission changes. But they do not explain whether that activity aligns with expected behavior.

Similarly, access reviews validate configuration at a point in time. They answer whether access should exist. They do not explain how that access is actually used.

A service account may have the correct permissions. A user may pass every certification review. A token may be issued through the correct process.

Yet over time, usage can drift.

- Frequency increases.

- Scope expands.

- Relationships change.

None of these shifts necessarily trigger alerts. But they change the risk posture.

This is where many identity threats begin: not with a loud breach, but with quiet privilege drift that accumulates invisibly across the identity fabric. The Identity Security Posture Management (ISPM) category was created to close this gap by surfacing misconfigured policies, excessive permissions, and dormant accounts before they become attack paths.
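The three kinds of drift listed above (frequency, scope, and relationships) can be checked by comparing a recent window of activity against an earlier baseline for the same identity. The following is a minimal Python sketch, not a production detector: the `Event` fields, the `summarize` and `drift` helpers, the `svc-backup` account, and the 2x frequency threshold are all hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Event:
    identity: str  # hypothetical identity name, e.g. a service account
    day: int       # day index since observation started
    resource: str  # what was accessed
    peer: str      # system or identity that was interacted with


def summarize(events):
    """Collapse a window of events into frequency, scope, and relationships."""
    days = {e.day for e in events}
    return {
        "per_day": len(events) / max(len(days), 1),
        "resources": {e.resource for e in events},
        "peers": {e.peer for e in events},
    }


def drift(baseline, recent, freq_ratio=2.0):
    """Report how the recent window differs from the baseline window."""
    b, r = summarize(baseline), summarize(recent)
    return {
        # Frequency: average daily volume grew by at least freq_ratio.
        "frequency_increased": b["per_day"] > 0
        and r["per_day"] / b["per_day"] >= freq_ratio,
        # Scope: resources touched now that were never touched before.
        "new_resources": sorted(r["resources"] - b["resources"]),
        # Relationships: peers seen now that were never seen before.
        "new_peers": sorted(r["peers"] - b["peers"]),
    }


# Illustrative data: one event per day for a week, then a busier day
# that also touches an unfamiliar resource through an unfamiliar peer.
baseline = [Event("svc-backup", d, "storage/backups", "backup-api") for d in range(7)]
recent = [
    Event("svc-backup", 7, "storage/backups", "backup-api"),
    Event("svc-backup", 7, "storage/backups", "backup-api"),
    Event("svc-backup", 7, "hr/payroll", "export-api"),
]
report = drift(baseline, recent)
print(report)
```

In this made-up example the account's permissions never change, yet the report flags all three shifts. None of them would necessarily raise an alert on their own, which is the point of comparing windows rather than inspecting single events.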

Non-Human Identities and Invisible Privilege

The growth of non-human identities has fundamentally changed the identity landscape.

AI agents, service accounts, APIs, automation scripts, and machine identities now outnumber human users by over 144 to 1, by some industry estimates. According to the Verizon Data Breach Investigations Report, the majority of breaches originate from compromised identities, and an increasing share of those involve credentials tied to non-human accounts that lack governance rigor.

These identities often operate continuously. They do not log in the same way humans do. They do not follow predictable patterns. And they are rarely monitored with the same rigor.

They also tend to accumulate privilege over time. A service account created for a specific task may later be reused across workflows. Permissions are added for convenience. Least privilege is abandoned. Ownership becomes unclear.

Over time, these identities become deeply embedded in the environment. They are trusted, but not fully understood.

This creates a layer of invisible privilege that traditional identity governance and privileged access management (PAM) models were not designed to manage. The connected application layer compounds this further: OAuth apps granted delegated permissions can quietly go rogue long after the original consent was given. We explore the detection mechanics in depth in "Rogue and Malicious OAuth Apps: Detecting Behavioral Drift in Applications."
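One way to make the privilege accumulation described above visible is to compare what an identity has been granted with what it has actually exercised. The sketch below assumes a simple usage log of `(permission, date)` pairs. The `unused_permissions` helper, the permission names, and the 90-day staleness window are hypothetical and do not reflect any particular product's API.

```python
from datetime import date


def unused_permissions(granted, usage_log, as_of, stale_after_days=90):
    """Return granted permissions not exercised within the staleness window."""
    # Keep only the most recent use of each permission.
    last_used = {}
    for permission, used_on in usage_log:
        if permission not in last_used or used_on > last_used[permission]:
            last_used[permission] = used_on

    stale = []
    for permission in sorted(granted):
        used_on = last_used.get(permission)
        # Never used, or last used too long ago: candidate for removal.
        if used_on is None or (as_of - used_on).days > stale_after_days:
            stale.append(permission)
    return stale


# Illustrative service account: three grants, only one used recently.
granted = {"storage.read", "storage.write", "iam.roles.update"}
usage_log = [
    ("storage.read", date(2026, 1, 10)),
    ("storage.write", date(2025, 6, 1)),
]
candidates = unused_permissions(granted, usage_log, as_of=date(2026, 1, 15))
print(candidates)
```

Here `iam.roles.update` was never used and `storage.write` was last used more than 90 days before the check, so both are returned as candidates for review. Whether to revoke them is still an ownership decision, which is why unclear ownership makes this kind of cleanup hard in practice.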

Identity in an AI and Agentic World

The next shift is already underway.

AI systems and agentic workflows are beginning to act on behalf of users and services. They make decisions, trigger actions, and interact with systems without direct human involvement. Tools like OpenClaw illustrate this clearly: autonomous agents that operate across enterprise applications leave identity breadcrumbs across OAuth grants, API tokens, and service principals, often on unmanaged devices that endpoint detection never sees. The identity-first approach to detecting this kind of agentic activity is covered in "OpenClaw: Beyond Endpoint Detection, Think Identity Security."

This introduces a new dimension to identity.

Identity is no longer just assigned. It is delegated. It is not only used. It acts.

In these environments, traditional assumptions break down.

- Who owns the identity of an agent?

- How is intent defined?

- What does normal behavior look like for an autonomous workload?

These are not theoretical questions. They are emerging operational challenges that require new thinking about AI governance and machine identity management.

As agentic systems scale, identity security will need to move from controlling access to overseeing behavior. Without that shift, organizations risk extending trust faster than they can understand it.

Detection Is Not the Same as Understanding

One of the most important distinctions in modern identity security is the difference between detection and understanding.

Detection identifies events that match predefined conditions. Understanding requires context.

It requires knowing how an identity typically behaves, how it interacts with other systems, and how those patterns evolve.

For example:

- A spike in API usage may be legitimate — or it may indicate misuse of a service account.

- A token request may follow expected patterns — or it may represent the beginning of unauthorized automation.

- An OAuth application may appear healthy in a posture check — and still be silently exfiltrating data.

Without context, all of these scenarios can look identical.
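To make the role of context concrete, the sketch below scores the same raw API call count against two different per-identity histories. It is only an illustration of baselining: the `deviation_score` helper, the identity names, and the sample counts are hypothetical, and a simple standard-deviation score stands in for whatever behavioral model a real system would use.

```python
from statistics import mean, pstdev


def deviation_score(history, observed):
    """How many standard deviations `observed` sits from this identity's own history."""
    mu = mean(history)
    sigma = pstdev(history)
    if sigma == 0:
        # A perfectly flat history: any change at all is a deviation.
        return 0.0 if observed == mu else float("inf")
    return (observed - mu) / sigma


# Daily API call counts for two hypothetical service accounts.
history = {
    "batch-export": [900, 1100, 1000, 1050, 950],
    "reporting-svc": [10, 12, 9, 11, 8],
}

# Both identities make 1000 calls today. The event looks the same in a log.
observed_calls = 1000
scores = {
    name: deviation_score(counts, observed_calls)
    for name, counts in history.items()
}
for name, score in scores.items():
    print(f"{name}: {score:.1f} standard deviations from its own baseline")
```

For `batch-export`, 1000 calls is exactly its average. For `reporting-svc`, the same number is hundreds of standard deviations above anything it has done before. A fixed threshold on call volume cannot tell these apart, while a per-identity baseline can.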

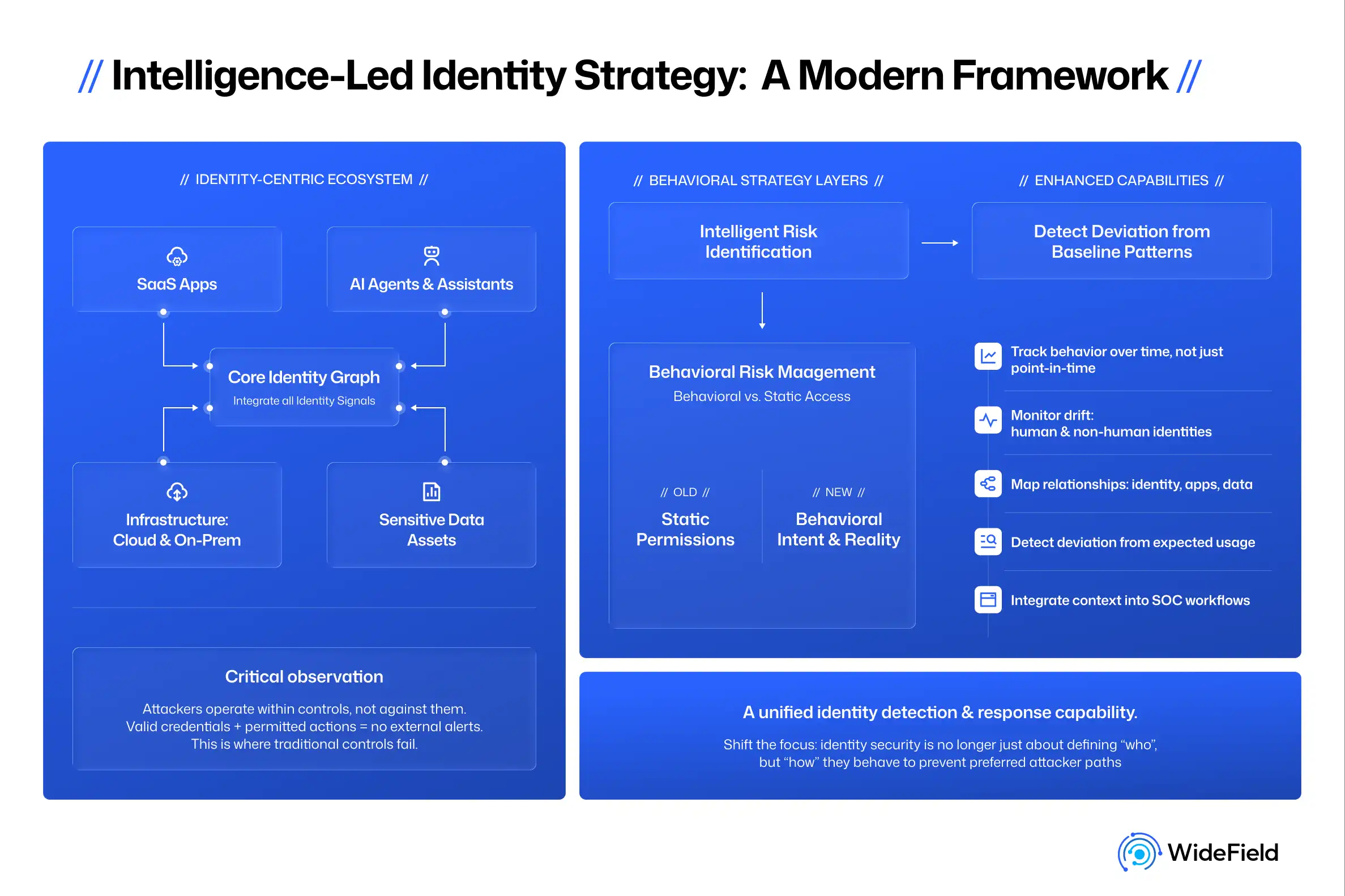

This is why identity security needs to become more intelligence-led. Not in the sense of adding more alerts, but in the sense of building a deeper behavioral model of how every identity — human and non-human — actually operates over time.

The challenge with ITDR as it has been practiced is that generic SIEM and XDR platforms were never built to carry this kind of identity context. As we argue in "ITDR is Dead. Long Live ITDR.", the practice model has reached its limits, not because identity detection doesn't matter, but because identity is a deeply stateful system that requires purpose-built visibility to reason about correctly.

Toward an Intelligence-Led Identity Strategy

To address these challenges, organizations need to rethink their identity security.

This does not mean abandoning governance or access control. Those remain foundational.

It means complementing them with visibility and detection that reflect real-world usage.

Key areas to focus on include:

- Tracking identity behavior over time rather than relying on point-in-time validation

- Monitoring privilege drift across both human and non-human identities

- Understanding relationships between identities, applications, and data

- Identifying deviations from expected usage patterns

- Integrating identity context into broader security operations workflows
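Of the focus areas above, the relationship view is perhaps the simplest to sketch. If delegations and grants are modeled as directed edges, a breadth-first traversal shows everything an identity can reach, directly or through the applications and service accounts acting on its behalf. The `reachable` helper and the edge names below are hypothetical and serve only as an illustration.

```python
from collections import deque


def reachable(edges, start):
    """Return every node reachable from `start` by following directed edges."""
    graph = {}
    for src, dst in edges:
        graph.setdefault(src, []).append(dst)

    seen, queue = {start}, deque([start])
    while queue:
        node = queue.popleft()
        for nxt in graph.get(node, []):
            if nxt not in seen:
                seen.add(nxt)
                queue.append(nxt)

    seen.discard(start)  # report what is reachable, not the starting point
    return seen


# Illustrative edges: "A -> B" means A can act as, or has access to, B.
edges = [
    ("user:alice", "app:report-bot"),   # OAuth consent
    ("app:report-bot", "sa:etl"),       # app uses a service account
    ("sa:etl", "data:payroll"),         # service account can read payroll
    ("user:bob", "app:wiki"),
]
alice_reach = reachable(edges, "user:alice")
print(sorted(alice_reach))
```

In this example, no single grant gives `user:alice` access to `data:payroll`, yet the chain of delegations does. An access review that inspects each edge in isolation would likely pass all of them.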

This approach aligns closely with emerging practices in Identity Threat Detection and Response (ITDR) and Identity Security Posture Management (ISPM), as well as newer frameworks like Identity Visibility and Intelligence Platforms (IVIP). Rather than treating identity as a static configuration, these approaches treat it as a dynamic system requiring continuous observation. But as we examine in "ITDR, ISPM, and IVIP: Do We Really Need More Identity Categories or Do We Need Better Outcomes?", the real question is not which acronym wins. It is whether these capabilities converge into a coherent strategy or fragment into yet another layer of disconnected tooling.

Prevention is critical. Detection is mandatory. CISA's IAM guidance makes clear that configuration hardening alone is insufficient without complementary runtime detection. NIST digital identity guidelines (SP 800-63) provide the underlying framework for thinking about identity assurance levels, but they must be paired with behavioral intelligence to be actionable in modern environments.

What This Means for Security Leaders in 2026

The shift toward identity-centric attacks is not temporary. It reflects a deeper change in how systems are built and how attackers operate.

For security leaders, this creates a clear mandate.

Identity security can no longer be evaluated solely through access reviews, policy enforcement, or compliance metrics.

It must be evaluated through understanding.

- Understanding how identity behaves.

- Knowing when trust is established and when it is extended.

- Modeling how trust can be misused without triggering immediate alarms.

Organizations that develop this level of intelligence will be better positioned to detect identity threats early and respond effectively. Those that rely solely on static models will continue to operate with blind spots, and attackers will continue to operate comfortably within them.

Identity security is not about adding another layer of control.

It is about developing visibility and detection that match how identity actually functions in modern environments.

Access defines intent. Behavior reveals reality.

As automation increases and AI systems take on more responsibility, that distinction will only become more important.

The future of identity security will not be defined by who has access.

It will be defined by who understands how identity behaves.